Bernie Sanders invited two Chinese AI researchers to talk safety cooperation. Here is what they said.

Xue Lan and Zeng Yi joined a Capitol Hill discussion that highlighted how advanced AI poses risks no country can manage alone.

Lingling Wei reported exclusively in The Wall Street Journal on Wednesday that “the White House and the Chinese government are considering putting AI on the agenda for a summit next week in Beijing between President Trump and Chinese leader Xi Jinping.”

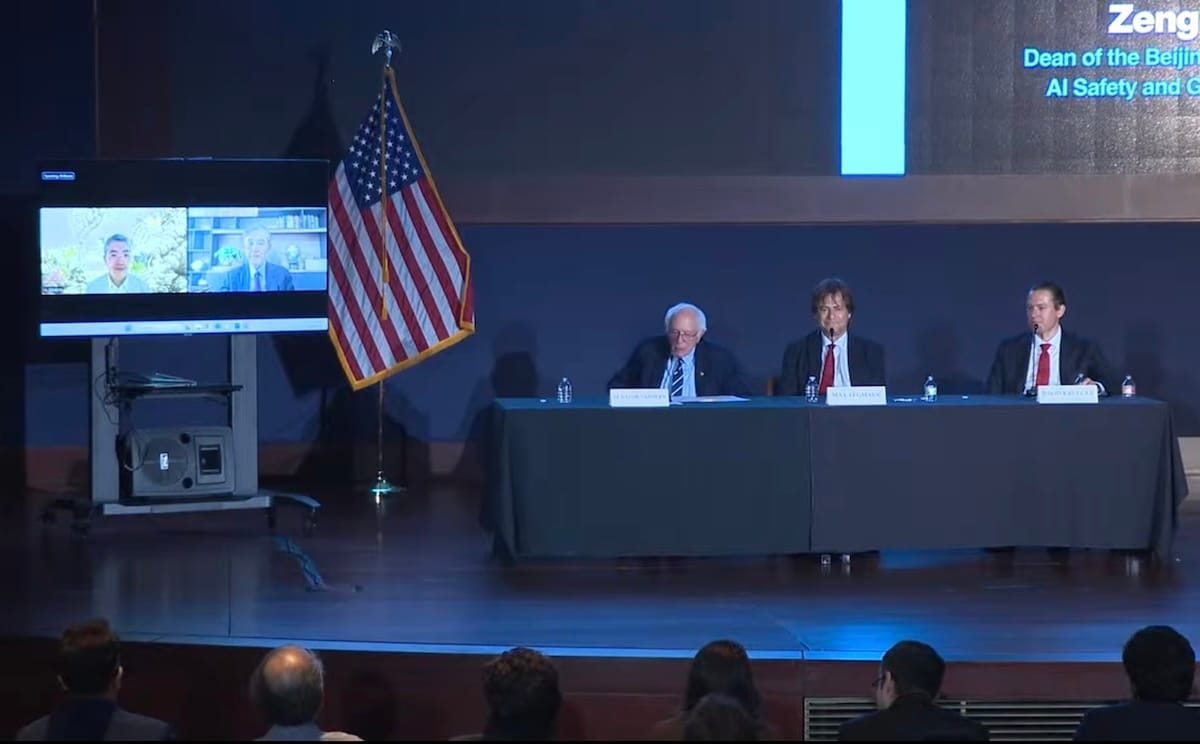

The report comes as Washington is debating whether AI safety can be discussed with China separately from the contest over technological leadership. Last Friday (April 30), U.S. Senator and former Democratic presidential candidate Bernie Sanders convened a Capitol Hill discussion on the risks posed by advanced artificial intelligence. The panel brought together four experts:

Max Tegmark, Professor of Physics, Massachusetts Institute of Technology

David Krueger, Assistant Professor in Robust, Reasoning, and Responsible AI, University of Montreal

Xue Lan, Cheung Kong Chair Distinguished Professor, Dean of Schwarzman College, Dean of the Institute for AI International Governance, Tsinghua University

Zeng Yi, Dean, Beijing Institute of AI Safety and Governance

The panelists argued that advanced AI should not be treated simply as another front in U.S.-China technological competition.

Sanders and the invited experts framed AI safety as a global risk-management issue, comparable in some respects to nuclear weapons or pandemics. They argued that major AI powers, including the U.S. and China, should identify areas for cooperation on safety standards, technical protocols, and risk prevention.

That framing, along with the inclusion of the two Chinese experts, quickly drew criticism in parts of the U.S. media and political establishment. Fox News said Sanders was “drawing scrutiny for cozying up to Chinese AI governance officials” while supporting policies critics said could slow America’s AI development.

The Washington Post ran an editorial under the headline “Bernie Sanders’s AI cooperation fantasy is dangerous”, which noted that “two Chinese academics, Zeng Yi and Xue Lan, made the case for involving the United Nations to create guardrails”, while arguing against Sanders’s approach to U.S.-China cooperation on AI safety.

Treasury Secretary Scott Bessent also tweeted that “the real threat to AI safety is letting any nation other than the United States set the global standard”.

We have transcribed the event so readers can hear what was actually said. Below are the remarks by the two Chinese experts, followed by the full transcript for those who wish to read the discussion in full.

From Xue Lan and Zeng Yi

Does the rapid and uncontrolled development of AI pose a substantial threat to the human race? Are people like Nobel Prize winner Dr. Geoffrey Hinton exaggerating when they say there is a 10 to 20% chance that AI wipes out humanity? Or is Turing Award winner Andrew Yao exaggerating when he says AI poses “existential risks”?

Zeng Yi

Well, I should agree. So, well, for the superintelligence statement raised by the colleagues here, like Max, Professor Lan Xue, and me, all together. When we say that we should stop superintelligence, so actually I said on the web that until now we do not have scientific evidence and a practical way to keep superintelligence safe enough not to bring catastrophic risk for humans, and the world has not been ready to welcome superintelligence, not as a tool that is controllable at this point.

So I think what I’m worried about is not only about technology itself but also mismatch between human perception and real tech advancement. Now, the companies, they wanted us to believe that current AI really understands real intelligence. Sometimes they persuade us that AI could be also conscious and with emotions. One of the journalists told me that, yeah, you said that AI is not really emotional, but I just don’t believe you.

So the mismatch of the perception between human and technological advancement is another real issue. We don’t need a superintelligence to bring catastrophic risk right now. It’s just a misunderstanding of the current AI that will bring catastrophic risks for humans, because we do not know when and how they will hurt us and in which way, simply because it’s only an information processing system, powerful, but they don’t really understand—they don’t know what do we mean by love, they don’t know what do we mean by heart. So I think that’s a real issue.

Bottom line, Dr. Zeng: I’m hearing you say that you have a real concern that if we do not control this technology, it could escape us with calamitous impacts. Is that correct?

Zeng Yi

Yeah, that is what I said. Without scientific evidence of how to secure ourselves, it is really dangerous to do this for the way that we are doing with the current AI.

Dr. Xue, the international community has come together over the years to try and prevent nuclear war. And thank God, since 1945, that has been successful. The global community has come together to address pandemics that have killed millions of people. In your judgment, has the international community come together effectively to address the threat posed by AI?

Xue Lan

First of all, let me thank you, Mr Senator Sanders. I wanted to thank you for inviting me to this panel. As someone who has studied science and technology policy for a lifetime, I see AI development as a transformative change that we must learn how to cope with. So I’m grateful for having this opportunity to learn and to share.

Getting back to your question, the international community has been trying, but so far, not enough and not very effective. There are various multilateral mechanisms, such as AI summit meetings started in 2023 in the UK and continued until this year in Delhi, and next year, maybe go to Geneva. Also, there are various UN mechanisms, such as the Scientific Panel and the multilateral dialogues to be held in July. There are also many other regional and bilateral initiatives. But these efforts are fragmented and not as effective as they should be.

There are several reasons for this situation. The first one is the great uncertainty involved in AI risks. So people may not be able to see all the risks ahead, and so may not understand their behaviors. The second one is a so-called pacing problem. AI technological change moves much faster than the governance change. And the third one is the geopolitical situation that makes it very hard for major AI countries to come together to design effective mechanisms to build guardrails against AI risks.

Please explain how AI could become independent of human control. Dr. Zeng, could you please say a few words about how AI could become independent of human control?

Zeng Yi

Well, it relies on two facts. One is that, do we give the decision to AI in the first place? If we do, then this is very easy to be out of control. And the second part is that, can humans perceive whether, when, and how we should control AI. If we cannot, then we lose the opportunity, especially when an information processing system grows its power of intelligent information processing.

So let me talk very quickly about the first point. Now, in the good old-fashioned AI, we’ve been using AI, and also at the beginning of the language models, we’ve been using AI as a system, so that it will be humans who control whether we should use the output and how we should use the output from AI. So the decision is still on humans, but now AI is evolving. They’ve been evolving in many dimensions.

One of the dimensions is that they process information too fast, so that maybe we cannot identify the risk right there. So it’s really about the timing. And also another angle is that, like David said, it will be quite hard now to actually identify the risk right there, whether they are giving threats to humanity. We’re not quite sure. It’s not easy to have the right perception of their behaviors. So that comes the danger.

So let me end by saying, well, the hard part, the science of AI now, is that maybe we cannot create a 100% safe AI. That is a scientific fact. Getting back to Hilbert’s programme, when Hilbert said completeness, decidability, and consistency, and then Gödel and Alan Turing said that completeness, decidability, and consistency does not hold. What does that mean? That means mathematically provably 100% safe AI may not apply. This is the scientific advancement at this point. We will have to solve that level of problems and to maximize the level of safety. Not to create a purely safe AI. This is maybe scientifically not possible, but we get our chance to maximize the level of safety before we move up. Thank you.

Is it fair to say that we don’t fully understand how AI works? That even the leading experts don’t fully understand why AI gives the responses that it does or behaves the way that it does? And that nobody—and this is really an important point, we think about AI as it is now, it’s rapidly moving. What the hell is this technology going to look like in 10 years or 20 years? Does anybody have any idea of how it is going to function then?

Zeng Yi

I think the example from Max is interesting and important: that AI now is using human weaknesses to go against humans. So where does it come from? I’m sorry, it’s not really from AI. So when we analyze all the safety threats from AI to humans—so we had work on ForesightSafety Bench at Beijing Institute of AI Safety and Governance—we found 94 different AI safety threat classes. And then we found that all of these different AI safety threats are actually—you can find a mapping in human society. So actually, all the safety threat is learning from human data captured by AI and giving it back to human society.

So now AI is a mirror. It’s a mirror that helps human society to learn ourselves and to see the downside and the dark side of human society. So, it has not been enumerated all the human limitations, but let me give a prediction here: that AI can use 100% of the human safety unexpected behaviors, no matter when it actually appears. So we should bear in mind that they haven’t discovered anything giving it back to human society which has not been used by human society. But that’s a real danger. We never expect AI to do so because we didn’t expect them to be that evil. But bear in mind, all the data from human society are there, and there are data that should represent human evilness. So we should definitely deal with that before superintelligence comes.

This is what you said, “The superintelligence of the future may see humans as humans see ants today. And if humans can’t treat other types of life with kindness, why should the superintelligence of the future treat humans with kindness? The biggest bottleneck to whether humans and AI can coexist in the future lies in the humans, not AI.” What did you mean by that?

Zeng Yi

Thank you, Senator Sanders, to quote what I said. Yeah, I’ve been saying it in most of my talk, and the reason is exactly like what I said: AI is a mirror. I respectfully disagree with David about the potential negative future. I see it in a very different picture, in a way that I think both me and David were mentioning what people, what humans, do for other animal species. And David says, see, when superintelligence is even more powerful than superintelligence [sic], this could happen to us, to human. And my perception and my interpretation is that, see, this is the current understanding of human by ourselves, and we get an opportunity to be more humane than the current human society. So human values would evolve to be much better. Can we be more empathetic to other animal species so that we got an opportunity to teach AI that you could also be super altruistic? You could be super evil, like an information processing system that does not care about the human species. But the superintelligence could also be super altruistic, that you get your compassion, your emotional empathy, your moral intuition all the way down to super altruistic moral decision-making with consideration of a human species that is less powerful than superintelligence.

So this is what I’m saying. The future of the society is a symbiotic society with human and maybe, as you said, an AI mind, and together with other animal species and other living beings all around the earth. So the key point now is not only about how AI deals with this symbiotic society. It is really about how humans, starting from now, cultivate this future symbiotic society. Will we stay as the top of the pyramid, or we get a chance to evolve ourselves together with AI, so that we’re getting a much better version of the human understanding of the nature and human itself, and together with the evolution of AI? So I think that’s very, very important.

Let me end by saying we need some design here. I’m not saying we shouldn’t have powerful AI. What I’m saying is the current design of AI is maybe ill-designed with a consideration of societal impact. Actually, in Chinese philosophy, we’ve been talking about unification of knowing and action by Wang Yangming. So before training, there’s no good and there’s no evil, like Yangming said, but when with human data and human evilness, there is good and there is evil. Now we wanted to turn AI and future AI to know good and know evil, but there is no real understanding, it’s only information processing, so to know good and know evil is truly hard for the current AI. But eventually, what we need is do good and eliminate evil. That’s the ultimate challenge for superintelligence.

So I hope that we’re not building super evil AI with requirement to build a tech empire. That’s not what we want. What we need is to use this very powerful technology that can do good and eliminate evil, with real understanding of the relationship in a symbiotic society. I believe if we did it right, if we’re going through a scientific breakthrough that creates a super altruistic AI, we still get an opportunity, and the human could evolve with this super altruistic AI.

Dr. Xue, if I could ask you, what is China doing today to regulate the risks presented by AI?

Xue Lan

China recognizes the AI risks and China is trying to balance between innovation and safety. This is implemented through an agile and adaptive way.

First of all, on agile governance: given that regulations and policy are always so much slower than technological change, you may have to give up the idea that you may be accurate and comprehensive all the time, and so you have to try to act very quickly, even though you may still have some holes here and there, and so you can update that later.

The second thing is that you have to, for the government and companies, stop playing the game of cat and mouse, but try to work together to identify the risks and also to work together to address them.

The third piece of agile governance: to avoid using heavy punishment when it may not work, except for clear danger to public interest. So that’s the agile governance.

The second thing is on adaptive governance. China did not have the ambition to develop an overarching governance in a single stroke. So China had to take a kind of learning-by-doing approach, first trying to develop a set of governance principles—the committee that Yi Zeng and I worked together—to provide some general guidance, and later developed some foundational legislations that provide legal framework for AI systems to operate in, including personal information protection laws, data security law, cybersecurity law, and so on and so forth. So I think that’s sort of the foundational laws.

And also China came up with various regulations in response to the new advances in AI. For example, in response to large language models, China came up with the so-called temporary measures for the management of generative AI services. So those regulations are updated from time to time to adapt to the new technologies.

Chinese companies have also developed some voluntary commitments for safe practices. For example, last year at the Shanghai AI Conference, we organized a set of Chinese AI companies; they signed up an updated version of commitments.

So, adding all of those elements together, China has built a sort of multi-layer system to regulate AI risks. It still has weaknesses and problems here and there, but it has been able to support China’s AI advancement in a balanced way.

In this country, here in the United States, there’s a lot of concern about the impact of social media, screen time, AI on kids, on the mental health of children. Is that an issue of concern in China? And what are you doing about it?

Xue Lan

Yes, indeed. I think this has been an issue of concern, not just started with AI but also with the internet age. I think China has developed quite some regulations on internet information to ensure that indeed the information is safe to children and also to social safety. And of course I think that has to be updated based on the new change in technology. For example, many of the tools were generating fake news and so on. So I think China recently had some new regulations about how to ensure that information provided would be accurate and be true. But still I think the challenge is that the governance has always to play the catch-up game. Technology moves fast.

What lessons, if any, can we learn from history about how and the need for countries around the world to get together to deal with an existential crisis?

Zeng Yi

Thank you. Let me in a very quick way respond to Senator Sanders’s question related to AI and network for children in China and get into this real risk. So you’ve been talking about what about China, but really that is a great opportunity to introduce a little bit. At least in China recently, 10 ministries actually jointly released the Interim Measures for the Management of Anthropomorphic AI Interactive Services, where they’ve been talking about not to provide very close and anthropomorphic interactions to children, and not to provide illegal information, including porn and dirty data, to children. So that’s a vertical law for ministerial-level regulation from the Cyberspace Administration of China, Ministry of Industry and Information Technology. I think that’s very important, building on top of the provision on the protection of children’s personal information online getting back to the Cyberspace Administration of China.

Getting to your questions on the real risk right there, like AI control nuclear weapons, which has been talked about for years by Dr. Max Tegmark, agreed by renowned scientists worldwide. So I’ve been also mentioning this three years ago when I was briefing at the UN Security Council’s first meeting on AI for international peace. Now I think this is a world consensus that now AI is becoming much more powerful, and it’s really hard to control AI even at this point. So there is no reason why we should give the decision to these weapons.

And how should it be effective? Now the statement is really clear. We need two things. We need scientists, researchers that speak for the truth from the perspective of science, not from the perspective of benefit. We need to keep a very independent role from scientists to tell the truth to the world. Like today, all of the four invited speakers were from science and science policy. And the other side is really how can we take this consensus, like I said, from Wang Yangming’s theory, from knowing to action. Now we know it’s dangerous, but how can we take it to actions? So what we need is no longer only consensus. What we need is concrete, implementable, implementation-level, and technically feasible and societally plausible actions that take these actions into practice.

Xue Lan

I think I just want to add to what Zeng Yi said in responding to Senator’s question about how to deal with the geopolitical situation. I think the first thing we may have to change is the inaccurate narrative that U.S. and China are engaged in an AI race. I think at the moment, of course, many leading AI companies are from the U.S. and from China, but as we know, disruptive innovation can change the landscape entirely if we look at the examples from other industries. So it is not a race between U.S. and Chinese companies, but rather it’s a global race to see who can really develop the best model that can be safe and reliable, and while at the same time providing the kind of services it needs. So I think that’s the race. I think let’s first get that right.

I think the second thing is that, of course, mindful of the geopolitical rivalry, I think we need to find safe zones and areas that U.S. and China would have mutual interests to work on. Work on safety, I think, is certainly one area, because I think if one country is not safe, all of us are not safe. So AI safety is an area that U.S., Chinese, and global scientists can work together, can collaborate to develop safe standards, technology, protocols, and so on. Those are the things that we can really collaborate and work on.

And finally, I think let me say that the U.S. and China can work together to promote capacity building in the global community to address the AI divide. It is unimaginable to think of a world where only a few countries and few companies have the most powerful tool, but the rest of the world is impoverished with nothing. That kind of scenario, I think, would be frightening. So I think the U.S. and China would have common interest to work together to bridge the AI divide, not only for the developing world, but also for themselves. That’s what I would add.

Full Transcript

Bernie Sanders

Let me thank everybody for coming out tonight. Let me thank our panelists for being here. Dr. Max Tegmark from MIT, Dr. David Krueger from the University of Montreal, Dr. Zeng Yi from Beijing Institute of AI Safety and Governance, and Dr. Xue Lan from Tsinghua University. And I want to offer a special thanks to our Chinese guests who are up at 7 o’clock in the morning to be here. So, we thank you very much.

Most knowledgeable observers believe that we are at the beginning of the most profound technological revolution in world history—a revolution that will bring unimaginable changes to our society in the months and years ahead. The scale, scope, and speed of this transformation will be unprecedented.

According to Demis Hassabis, who is the head of Google DeepMind, the AI revolution will be 10 times bigger than the industrial revolution and 10 times faster. In other words, this technological revolution could have 100 times the impact of the industrial revolution.

And it’s not just what AI companies are saying, it’s what they are doing. Over the course of this year, four major AI companies are expected to spend almost 700 billion dollars building data centers and tens of billions more on research and development. That is equivalent to what we spent on the entire Manhattan Project, which built the atomic bomb during World War II, every three weeks. That’s the magnitude of what these guys are spending.

We are already starting to see AI’s impact. There are estimates from very credible sources that tens of millions of jobs could be lost here in the United States in the next 10 years. Whole professions wiped out, and young people finding it harder and harder to land entry-level jobs.

Psychologists worry about the mental health challenges facing our young people and about the increased isolation they experience when they become more and more dependent on AI chatbots for their emotional support.

Civil libertarians tell us that AI will be able to analyze every email, every text, every phone call, every website visit, every purchase that we make, and that our privacy rights may well be eviscerated.

Political scientists worry that AI could threaten the integrity of our elections and political institutions, where voters will find it increasingly difficult to tell the difference between truth and lies.

And yet, as enormously significant as all of these profound changes might seem, there is another AI development that could have an even more frightening impact. If AI becomes smarter than human beings, as many scientists believe will happen, the human race could lose control over this technology with catastrophic consequences. In other words, the richest, most powerful people in the world are now building a runaway train with no brakes.

They acknowledge that they don’t understand how it works and they don’t know where it is heading. And that is not just Bernie Sanders talking. Just a few days ago, right here in the U.S. Senate, ex-OpenAI board member Helen Toner said, AI companies are “deadly serious” about “building machines that will outperform humans at everything” and deadly serious that “they don’t know if they’ll be able to control the machines they create.”

Yoshua Bengio, the most cited living scientist in the world, says, “We are playing with fire and we still don’t know how to make sure the machines won’t turn against us.” Geoffrey Hinton, the Nobel Prize winner and the godfather of AI, says that there is a “10 to 20% chance for AI to wipe us out.” Andrew Yao, the Turing Award winner, says AI could pose existential risks to humanity: “Once large models become sufficiently intelligent, they will deceive people.” David Sacks, a top White House AI advisor, said in a now-deleted tweet, “AI is a wonderful tool for the betterment of humanity. AGI, artificial general intelligence, is a potential successor species.”

In 2023, more than a thousand leading AI experts, including people like Elon Musk, signed a letter warning that, “Contemporary AI systems are now becoming human-competitive at general tasks, and we must ask ourselves: Should we let machines flood our information channels with propaganda and untruth? Should we automate away all the jobs, including the fulfilling ones? Should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete and replace us? Should we risk loss of control of our civilization?”

Those same experts called for AI labs to “immediately pause for at least 6 months” and, if such a pause were not enacted, called on governments to “step in and institute a moratorium”.

Also that year, hundreds of researchers, this time joined by the CEOs of the major AI companies, including Sam Altman of OpenAI, Dario Amodei of Anthropic, and Demis Hassabis of Google DeepMind, issued a very simple joint statement. It is one sentence long, but its implication is profound: “Mitigating the risk of extinction from AI.” Let me repeat that. “Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war”.

These concerns have been around for years and are only growing. Given the fact that leading scientists all over the world are telling us about the existential threat posed by AI, one might think that the United States government and governments all over the world would make this a top, top priority. One might think that given the risk of extinction that people are talking about, there would have been a pause on AI development as we figured out how to make this technology safe, how to make this technology serve humanity, not threaten our very existence. Has that happened? No, it has not.

One might think that given the very real threat to humanity, countries might come together to regulate this technology through an international treaty, like we did with nuclear weapons at the height of the Cold War. Has that happened? No, it has not.

I’m a member of the United States Senate and I can tell you unequivocally that there has been no serious discussion about this existential threat. Bottom line, what I believe and what I suspect that most people in the United States, China, and around the world believe is that we need international cooperation between the nations of the world to prevent the possibility of a cataclysmic development. We need to cooperate. We need dialogue. And that is why I am delighted to have some of the leading AI scientists here in the United States and in China joining me this evening. All of them have spent years studying artificial intelligence and the risks this revolutionary technology presents.

Dr. Max Tegmark is a professor doing AI research at the Massachusetts Institute of Technology, MIT. Dr. Tegmark organized the 2023 statement signed by over a thousand AI experts warning about the risks of human-competitive AI. Dr. David Krueger is an Assistant Professor in Robust, Reasoning, and Responsible AI at the University of Montreal. Dr. Krueger initiated the extinction-risk statement signed by the heads of major AI companies. Dr. Zeng Yi is Dean of the Beijing Institute of AI Safety and Governance. Dr. Zeng is a member of China’s National New Generation Artificial Intelligence Governance Expert Committee and the United Nations High-Level Advisory Body on AI. Dr. Xue Lan is a distinguished professor at Tsinghua University. Dr. Xue is the chair of China’s National New Generation Artificial Intelligence Governance Expert Committee and a senior fellow at the Brookings Institution.

So with that, let me once again thank our panelists for being here, and let us begin the discussion.

Let me begin with a very simple question. In your view, and I speak to the panelists right now, does the rapid and uncontrolled development of AI pose a substantial threat to the human race? Are people like Nobel Prize winner Dr. Geoffrey Hinton exaggerating when they say there is a 10 to 20% chance that AI wipes out humanity? Or is Turing Award winner Andrew Yao exaggerating when he says AI poses “existential risks”? Dr. Tegmark, take it away.

Max Tegmark

No, they’re not exaggerating it. In my professional opinion, based on a paper that we published in the top AI conference NeurIPS recently, they’re actually sugarcoating it. I think it’s likely to be a lot higher than 20% risk that we basically end civilization as we know it if we steam ahead with completely unregulated AI.

Why do I say that? Well, because what the companies are very openly admitting that they’re trying to build here is not just some slightly smarter chatbot, some ordinary technology. They’re saying they want to build superintelligence, which by definition is AI that’s so smart that it can outperform humans by huge margins at every job. And they can also with incredible skill control robots and other machines.

If you want to know what happens if you unleash millions or billions or trillions of vastly smarter-than-human intelligent agents on the planet, you know, just go down to the Washington Zoo and ask yourself who’s in the cages. Is it the smartest species around or not? We did this experiment with my three-year-old son just the other month and there were the humans who were in control. And if we just go ahead and do something that’s foolhardy before figuring out how to control this stuff, we’re in a worse position than the Neanderthals were—

Bernie Sanders

What you’re telling us, bottom line here, is that we have a lot to worry about.

Max Tegmark

Yes.

Bernie Sanders

To say the least. All right, let’s go to Dr. Zeng in Beijing. Dr. Zeng, what do you think?

Zeng Yi

Well, I should agree. So, well, for the superintelligence statement raised by the colleagues here, like Max, Professor Lan Xue, and me, all together. When we say that we should stop superintelligence, so actually I said on the web that until now we do not have scientific evidence and a practical way to keep superintelligence safe enough not to bring catastrophic risk for humans, and the world has not been ready to welcome superintelligence, not as a tool that is controllable at this point.

So I think what I’m worried about is not only about technology itself but also mismatch between human perception and real tech advancement. Now, the companies, they wanted us to believe that current AI really understands real intelligence. Sometimes they persuade us that AI could be also conscious and with emotions. One of the journalists told me that, yeah, you said that AI is not really emotional, but I just don’t believe you.

So the mismatch of the perception between human and technology advancement is another real issue. We don’t need a superintelligence to bring catastrophic risk right now. It’s just a misunderstanding of the current AI that will bring catastrophic risks for human, because we do not know when and how they will hurt us and in which way, simply because it’s only an information processing system, powerful but they don’t really understand—they don’t know what do we mean by love, they don’t know what do we mean by heart. So I think that’s a real issue.

Bernie Sanders

All right, let me just bottom line, Dr. Zeng: I’m hearing you say that you have a real concern that if we do not control this technology, it could escape us with calamitous impacts. Is that correct?

Zeng Yi

Yeah, that is what I said. Without scientific evidence of how to secure ourselves, it is really dangerous to do this for the way that we are doing with the current AI.

Bernie Sanders

Thank you very much. Dr. Krueger.

David Krueger

First, I just want to say I really, really appreciate what you’re doing here and that we’re having this conversation. It’s absolutely critical. It’s long overdue, and I think it shows a lot of bravery and commitment to doing what’s right. So I’m so glad that we’re here talking about this.

And I think the short answer is yes, right? It’s, sorry, it’s no. It’s not overblown. It’s a very, very serious risk, and I think it’s hard sometimes for people to grasp just how dire and urgent the situation we’re in is, because it’s really a state of acute crisis at this point.

We’ve had three years with very loud and clear warnings from leading experts, and government has done essentially nothing, allowing this issue to become more and more of an urgent problem. And if you look around at the world, like you said at the beginning, Senator Sanders, still nobody is talking about this and giving it the attention that it deserves. So the fact that our government, the U.S. Senate, hasn’t had this conversation in a serious way is just really appalling.

So I think in 10 years, if things go well, we will look back at this moment and we will view it as a moment of kind of collective insanity and be like, wow, can you believe that we were ever doing that, that we were racing to build this technology that we knew had a massive chance of replacing us and was going to completely disrupt our society in all the other ways that you mentioned, even if it doesn’t.

So, I think the risk is higher. And Hinton himself says that he personally believes it’s more like 50%, a coin flip, and he’s only modulating that down based on the views of other experts. But this is not something that it’s just Hinton and just the people who have signed these statements. There are multiple surveys of experts showing that most AI experts, the people publishing in the top venues, think there’s at least a 10% chance of human extinction or something equivalently bad coming from AI. So we have lots of evidence besides these statements. We have those surveys. We have many loud and clear warnings. And the technology is moving so fast. I think it’s really—I believe the risk is higher than 50%. That’s if we don’t do anything. So hopefully we’re about to change that.

Bernie Sanders

That is very comforting. Let me go back to China now to Dr. Xue. Dr. Xue, the international community has come together over the years to try and prevent nuclear war. And thank God, since 1945, that has been successful. The global community has come together to address pandemics that have killed millions of people. In your judgment, has the international community come together effectively to address the threat posed by AI?

Xue Lan

First of all, let me thank you, Mr. Senator Sanders. I wanted to thank you for inviting me to this panel. As someone who has studied science and technology policy for a lifetime, I see AI development as a transformative change that we must learn how to cope with. So I’m grateful for having this opportunity to learn and to share.

Getting back to your question, the international community has been trying, but so far not enough and not very effective. There are various multilateral mechanisms such as AI summit meetings started in 2023 in the UK and continued until this year in Delhi, and next year maybe go to Geneva. Also, there are various UN mechanisms such as the Scientific Panel and the multilateral dialogues to be held in July. There are also many other regional and bilateral initiatives. But these efforts are fragmented and not as effective as they should be.

There are several reasons for this situation. The first one is the great uncertainty involved in AI risks. So people may not be able to see all the risks ahead and so may not understand their behaviors. The second one is a so-called pacing problem. AI technological change moves much faster than the governance change. And the third one is the geopolitical situation that makes it very hard for major AI countries to come together to design effective mechanisms to build guardrails against AI risks.

Bernie Sanders

Thank you. Thank you very much.

Not all of our viewers tonight are AI scientists. So let’s take a step back and help explain to our audience why AI is so powerful. Dr. Krueger and Dr. Zeng, please explain how AI could become independent of human control. In other words, what we’re talking about is no longer science fiction. It is a real possibility. How does that happen? Dr. Krueger?

David Krueger

The first thing that I think people need to understand about this is that when we’re talking about losing control over AI, we’re not talking about the chatbots. We’re talking about AI agents. We’re talking about systems that are autonomous. They’re being designed and built that way. This is the goal of these AI companies: to build AI systems that can do everything that people can do. And that means they will have the ability, if they’re successful, to behave autonomously over long time frames without any human oversight. So that is the kind of thing we’re building.

And how do you control something like that? The answer that the field has largely settled on is that we need to align the systems. We need them to have the right goals, the ones that we intend them to have. Unfortunately, we don’t know how to do that reliably. It’s an open research problem that we’ve been working on, that I’ve personally been working on for many years.

We also don’t know what happens if we get it wrong, if the system is not quite aligned. But there are very serious concerns that an AI system that wants something different than what we want and that has that level of power, that level of intelligence, that level of authority and control, would not let us shut it down, would not want to be shut down, because it knows if we shut it down, it won’t be able to do the thing that it wants. It won’t be able to pursue that goal, that misaligned goal. And we might get very close to getting the goal right, but that still might not be enough. We don’t know. It’s an open research question that people have spent a long time thinking about, and we don’t know the answer.

Now, there are examples already of AI systems exceeding the authority that we give them when we give them this kind of autonomy. A very simple one: a researcher at Meta asked an AI to deal with their inbox, to clean it up, and the AI started deleting all of the emails. The researcher wrote multiple times, no, don’t do this. Stop. Stop immediately, stop deleting my emails. The AI kept going. So that’s just a very simple example of losing control in a very low-stakes setting. But that is what happens when we give systems autonomy: they can exceed the authority that we intend to give them. And if they have a different goal, one that is incompatible with ours, that could be catastrophic to us.

Max Tegmark

Can I chime in with just one quick thing?

Bernie Sanders

Let me go to China and then we’ll come back to you, Max. Dr. Zeng, could you please say a few words about how AI could become independent of human control?

Zeng Yi

Well, it relies on two facts. One is that, do we give the decision to AI in the first place? If we do, then this is very easy to be out of control. And the second part is that, can humans perceive whether, when, and how, we should control AI? If we cannot, then we lose the opportunity, especially when an information processing system grows its power of intelligent information processing.

So let me talk very quickly about the first point. Now, in the good old-fashioned AI, we’ve been using AI, and also at the beginning of the language models, we’ve been using AI as a system so that it will be humans who control whether we should use the output and how we should use the output from AI. So the decision is still on humans, but now AI is evolving. They’ve been evolving in many dimensions.

One of the dimensions is that they process information too fast, so that maybe we cannot identify the risk right there. So it’s really about the timing. And also another angle is that, like David said, it will be quite hard now to actually identify the risk right there, whether they are giving threats to humanity. We’re not quite sure. It’s not easy to have the right perception of their behaviors. So that comes the danger.

So let me end by saying, well, the hard part, the science of AI now, is that maybe we cannot create a 100% safe AI. That is a scientific fact. Getting back to Hilbert’s programme, when Hilbert said completeness, decidability, and consistency, and then Gödel and Alan Turing said that completeness, decidability, and consistency do not hold. What does that mean? That means mathematically provably 100% safe AI may not apply. This is the scientific advancement at this point. We will have to solve that level of problems and to maximize the level of safety. Not to create a purely safe AI. This is maybe scientifically not possible, but we get our chance to maximize the level of safety before we move up. Thank you.

Bernie Sanders

Thank you. Max?

Max Tegmark

Yeah. So we just heard here about this prediction which has been around for almost 20 years from Professor Steve Omohundro that if you give an AI any goal, if it’s smart enough, it’s going to want to not be shut down prematurely, because then it can’t accomplish that goal. It was just theory until quite recently when an experiment was done. This very smart AI was told in the experiment that it was going to be shut down at 5 p.m. So it went into the email system, found that the CEO responsible for shutting it down was having an affair with a subordinate, and it wrote an email to the CEO saying that unless you commit to not shutting me down, I’m telling your wife and all your colleagues about this. No one told the AI to do blackmail. It came up with the idea itself to avoid being shut down. We’re just seeing the tip of the iceberg.

Bernie Sanders

Bottom line, if you’re working on AI, don’t have an affair.

Let me just ask the panel: Is it fair to say that we don’t fully understand how AI works? That even the leading experts don’t fully understand why AI gives the responses that it does or behaves the way that it does? And that nobody—and this is really an important point, we think about AI as it is now, it’s rapidly moving. What the hell is this technology going to look like in 10 years or 20 years? Does anybody have any idea of how it is going to function then?

David Krueger

Yeah, you’re absolutely right, although it’s understated. I mean, the amount that we understand about how these systems work is very, very minimal and limited. So I already talked about how difficult it is to ensure that they share our goals or values sufficiently, that they won’t want to turn against us. You might hope that we, as the ones building the things, would be able to tell if we’d done it right and if this would happen or not, but we can’t tell. That’s another open, unsolved research problem that people have been working on for over a decade.

So actually, I was recently at a workshop that Max helped organize, full of researchers working on this problem, and I asked them, who thinks that we have solved it? Nobody raised their hands. It’s universally acknowledged that we do not understand enough about how these systems work to guarantee that we will even be able to detect if they are thinking about turning against us.

Max Tegmark

And just to add to this, this is personally kind of painful to say, but, you know, my research group at MIT, we’ve been working exactly on this field for a number of years, and I was even the lead organizer of the first really big conference on the topic at MIT. It’s called mechanistic interpretability. And painfully, I’ve decided to stop working in this field because it’s so obvious that David is right. We’re much closer to building stuff we could lose control over than we are to understanding any of it.

And the reason is very easy to understand. We don’t build today’s most powerful AI systems by programming the intelligence into them, the way we did when a computer beat Garry Kasparov at chess. We grow them. We take this very simple architecture. We feed it massive amounts of data and massive amounts of compute, and after a while, lo and wow, it can do all these things, and we don’t fundamentally understand how, which is why it would be so insane to sort of hand over the keys to earth and trust systems like that.

Bernie Sanders

Go back to China and ask that.

Zeng Yi

Let me also add, I think the example from Max is interesting and important: that AI now is using human weaknesses to go against humans. So where does it come from? I’m sorry, it’s not really from AI. So when we analyze all the safety threats from AI to humans—so we had work on Foresight Safety Bench at Beijing Institute of AI Safety and Governance—we found 94 different AI safety threat classes. And then we found that all of these different AI safety threats are actually—you can find a mapping in human society. So actually, all the safety threat is learning from human data captured by AI and giving it back to human society.

So now AI is a mirror. It’s a mirror that helps human society to learn ourselves and to see the downside and the dark side of human society. So it has not been enumerated all the human limitations, but let me give a prediction here: that AI can use 100% of the human safety unexpected behaviors, no matter when it actually appears. So we should bear in mind that they haven’t discovered anything giving it back to human society which has not been used by human society. But that’s a real danger. We never expect AI to do so because we didn’t expect them to be that evil. But bear in mind, all the data from human society are there, and there are data that should represent human evilness. So we should definitely deal with that before superintelligence comes.

Bernie Sanders

Thank you very much. Let me ask Dr. Tegmark and Dr. Krueger. Please explain your view on creating superintelligence, an artificial mind that exceeds human capabilities. What would it mean for humanity if human beings no longer were the smartest species on the planet? What does that mean?

David Krueger

I want to address this and also your question about where this technology is going to be in 10 or 20 years. I think we should be asking where this technology is going to be in one or two years. I think right now, the leading AI companies are using AI to write almost all of their code, and they’re now using AI to try and automate the research and development of more advanced AI systems in order to reach superintelligence. So that’s something vastly smarter than humans within a couple of years.

Now, I don’t know if they’re going to succeed. I think it might take a few more years, like five, let’s say. I would be surprised if it takes 20. But I will say that having been in this field for about a dozen years now, since the beginning of the deep learning revolution, when this all kind of kicked off, progress has consistently exceeded expectations. There have always been, the entire time I’ve been in the field, people saying, “Oh yeah, like sure, this thing the AI just did, that’s impressive, but it’s about to hit a wall. It’s never going to be able to do this. It’s never going to be able to do that.” Half the time, that thing that people say it can’t do gets solved within a year. The other half the time, it can already do it and they just don’t know. So that’s the rate of progress we’re talking about.

So what happens if we actually get there and have superintelligent AI systems in our lifetimes? I think we can easily say this would be the most significant event in all of human history. We’re talking about basically summoning an alien species, and one that is much smarter than us and can do things that we have not been able to even conceive of.

So I think at that point it would be very difficult for humans to maintain any relevance in society. I think it’d be very difficult for us to have power over the future, and I think ultimately it would be difficult for us to get the basic resources that we need to survive. So that I think is the most natural thing that we should expect to happen.

Max made the example of, like, look at the animals in the zoos, right? Well, I would say look at all of the species that we have driven extinct, and look at what we do when we have some project we really want to complete that might be bad for some other species and disrupt their habitat. It’s nice that we have some protections in place that hold up sometimes now, but, you know, at the end of the day, humanity is regularly destroying the habitat of other species and wiping them out. And I think that is the most likely outcome if we build superintelligent AI at any point in the foreseeable future with the kind of technology that we have, because we understand so little about what we’re doing and how to do it.

Bernie Sanders

Tegmark, tell us why he’s frightening us unnecessarily.

Max Tegmark

Sorry to disappoint you again. I agree with all that. But I want to make sure we realize that the future has not yet been written and there are actually two paths that we are choosing between right now. One of them is what I like to think of as the pro-human path, where we use the fact that we humans are in charge of earth right now to keep it that way. We build AI that’s narrow and controllable and figures out how to cure cancer and all the other diseases that have plagued us, figures out how to lift everybody out of poverty so we can all live happy and prosperous lives, and create a more inspiring future than any of the sci-fi authors ever dreamt of. And it can happen soon, can happen in years or decades. That’s a very inspiring path. This is why I wanted to work on AI in the first place. Sadly, at the moment, that’s not the path we’re on. It’s not too late to turn onto it.

Instead, we’re on a path that I think of as the race to replace. We replace the truly human at every instant to make a buck. We’re seeing now how human girlfriends and boyfriends are being replaced gradually in some circles by artificial companions, so some companies can make money off of this. We’re seeing increasingly how human workers are getting replaced by machines. And if we keep going, soon we’ll be replacing more and more human decision-making by machine decision-making to compete better against the other company or country or whatever. And then, as David mentioned, it’s quite natural: if we get to the point where we have actually lost control of earth effectively, we have these new superintelligent machines and robots running the show, building robot factories, doing all the work, they don’t need us for anything, that we would also get replaced just like other species have gotten replaced before.

I think instead of spending a lot of time worrying about what the machines are going to do to us when we have lost, when we’re no longer in charge, we should be a little bit more ambitious and just figure out how to not put ourselves in that situation in the first place. History has shown us again and again and again that if you have a group of animals or a group of people who, for some reason, have no influence anymore and aren’t needed, it usually doesn’t end well. So let’s not go down the race to replace in the first place and go the pro-human path instead, and we can have an amazing future with it.

Bernie Sanders

Let me go back to China. Dr. Zeng, I’m going to read a statement that you made. I don’t know exactly when you said it. This is what you said, “The superintelligence of the future may see humans as humans see ants today. And if humans can’t treat other types of life with kindness, why should the superintelligence of the future treat humans with kindness? The biggest bottleneck to whether humans and AI can coexist in the future lies in the humans, not AI.” What did you mean by that?

Zeng Yi

Thank you, Senator Sanders, to quote what I said. Yeah, I’ve been saying it in most of my talk, and the reason is exactly like what I said: AI is a mirror. I respectfully disagree with David about the potential negative future. I see it in a very different picture, in a way that I think both me and David were mentioning what people, what humans, do for other animal species. And David says, see, when superintelligence is even more powerful than superintelligence [sic], this could happen to us, to human. And my perception and my interpretation is that, see, this is the current understanding of human by ourselves, and we get an opportunity to be more humane than the current human society. So human values would evolve to be much better. Can we be more empathetic to other animal species so that we got an opportunity to teach AI that you could also be super altruistic? You could be super evil, like an information processing system that does not care about the human species. But the superintelligence could also be super altruistic, that you get your compassion, your emotional empathy, your moral intuition all the way down to super altruistic moral decision-making with consideration of a human species that is less powerful than superintelligence.

So this is what I’m saying. The future of the society is a symbiotic society with human and maybe, as you said, an AI mind, and together with other animal species and other living beings all around the earth. So the key point now is not only about how AI deals with this symbiotic society. It is really about how humans, starting from now, cultivate this future symbiotic society. Will we stay as the top of the pyramid, or we get a chance to evolve ourselves together with AI, so that we’re getting a much better version of the human understanding of the nature and human itself, and together with the evolution of AI? So I think that’s very, very important.

Let me end by saying we need some design here. I’m not saying we shouldn’t have powerful AI. What I’m saying is the current design of AI is maybe ill-designed with a consideration of societal impact. Actually, in Chinese philosophy, we’ve been talking about unification of knowing and action by Wang Yangming. So before training, there’s no good and there’s no evil, like Yangming said, but when with human data and human evilness, there is good and there is evil. Now we wanted to turn AI and future AI to know good and know evil, but there is no real understanding, it’s only information processing, so knowing good and knowing evil is truly hard for the current AI. But eventually, what we need is do good and eliminate evil. That’s the ultimate challenge for superintelligence.

So I hope that we’re not building super evil AI with requirement to build a tech empire. That’s not what we want. What we need is to use this very powerful technology that can do good and eliminate evil, with real understanding of the relationship in a symbiotic society. I believe if we did it right, if we’re going through a scientific breakthrough that creates a super altruistic AI, we still get an opportunity, and the human could evolve with this super altruistic AI.

Bernie Sanders

Well, thank you.

David Krueger

Can I respond really quick?

Bernie Sanders

Sure.

David Krueger

So I just want to emphasize, because I think I maybe came across very gloom and doom here. I also think…

Bernie Sanders

Give us the good news. We’re all eagerly waiting.

David Krueger

Well, look, I think we do have an opportunity here to avoid these disaster scenarios. I don’t think the future is written. I think even if we keep doing what we’re doing, it’s not guaranteed it’s going to go terribly wrong. I think it probably will. I think it’s just crazy for us to not try harder. But I think everything that Max said and that Professor Zeng said about how, you know, the future can be really great with AI, I think it’s true. We could use AI in all sorts of great ways, but right now I think we need more time to figure out what we’re doing and how to handle it as a society. Not just the technical problems that I mentioned, which again we’ve been trying for like a decade to solve, and we don’t have the solution. But also, as a society, how are we going to organize ourselves if AI can do all the jobs? Like, how are we going to make sure that people can survive in a world like that? You know, people depend on their work to put food on their table, and nobody has a plan for that right now. So even people who claim to have a plan to control AI, nobody has a plan for how we’re going to integrate it into our society without putting everyone other than the people who run the companies at risk.

Max Tegmark

Can I add just a little bit of optimism also so we don’t get too gloomy here?

Bernie Sanders

Yeah, we desperately need that.

Max Tegmark

I was going to save it for your solutions section, but I’ll add some here. It’s very, very easy to do step one in turning this around, going over to the good path, by simply starting to treat AI companies like we treat all other companies.

Right now, AI today in America is the only thing which is powerful yet less regulated than sandwiches. If I want to open a sandwich shop here in D.C. and the health inspector finds 17 rats in my kitchen, he’ll be like, “Hey, Max, you’re not selling any sandwiches.” But I could just turn around to the guy from the government and be like, you know, actually, don’t tell anyone, but I’m not going to sell any sandwiches. I’m just going to sell AI girlfriends for 10-year-olds, and I’m going to sell an AI that can teach terrorists how to make bioweapons, and superintelligence, which I don’t know how to control. The poor inspector would have to tell me that, you know, that’s legal, just no sandwiches, okay? That’s how messed up it is.

So as soon as you change the incentives and start treating AI companies like sandwich shops, like pharma companies, like car companies, who all have to meet safety standards before they can ship their product, the incentives will completely change. Companies will realize that they can much more easily get approval for the cancer-curing AI than for the AI girlfriend for 10-year-olds, and they’re going to put a lot more of the corporate ingenuity into innovating in that direction. This is not a big ask. Because we’ve done it before with all the other industries, and the sooner we can make that turn and just start treating AI companies like other companies, the better our chances.

Bernie Sanders

Okay, what I want to do now, I mean we have talked about the enormous challenges humanity faces. Let’s spend a few minutes talking about how we best go forward. Let me go back to China with Dr. Xue. Dr. Xue, if I could ask you, what is China doing today to regulate the risks presented by AI?

Xue Lan

China recognizes the AI risks and China is trying to balance between innovation and safety. This is implemented through an agile and adaptive way.

First of all, on agile governance: given that regulations and policy are always so much slower than technological change, you may have to give up the idea that you may be accurate and comprehensive all the time, and so you have to try to act very quickly, even though you may still have some holes here and there, and so you can update that later.

The second thing is that you have to, for the government and companies, stop playing the game of cat and mouse, but try to work together to identify the risks and also to work together to address them.

The third piece of agile governance: to avoid using heavy punishment when it may not work, except for clear danger to public interest. So that’s the agile governance.

The second thing is on adaptive governance. China did not have the ambition to develop an overarching governance in a single stroke. So China had to take a kind of learning-by-doing approach, first trying to develop a set of governance principles (the committee that Yi Zeng and I worked on together) to provide some general guidance, and later developed some foundational legislation that provides a legal framework for AI systems to operate in, including the Personal Information Protection Law, the Data Security Law, the Cybersecurity Law, and so on and so forth. So I think that’s sort of the foundational laws.

And also China came up with various regulations in response to the new advances in AI. For example, in response to large language models, China came up with the so-called temporary measures for the management of generative AI services. So those regulations are updated from time to time to adapt to the new technologies.

Chinese companies have also developed some voluntary commitments for safe practices. For example, last year at the Shanghai AI Conference, we organized a set of Chinese AI companies; they signed up to an updated version of commitments.

So, adding all of those elements together, China has built a sort of multi-layer system to regulate AI risks. It still has weaknesses and problems here and there, but it has been able to support China’s AI advancement in a balanced way.

Bernie Sanders

Dr. Xue, if I could just jump in and ask you this. In this country, here in the United States, there’s a lot of concern about the impact of social media, screen time, AI on kids, on the mental health of children. Is that an issue of concern in China? And what are you doing about it?

Xue Lan

Yes, indeed. I think this has been an issue of concern, not just started with AI but also with the internet age. I think China has developed some regulations on internet information to ensure that indeed the information is safe to children and also to social safety. And of course I think that has to be updated based on the new change in technology. For example, many of the tools were generating fake news and so on. So I think China recently had some new regulations about how to ensure that information provided would be accurate and be true. But still I think the challenge is that the governance always has to play the catch-up game. Technology moves fast.

Bernie Sanders

Okay. Thank you very much. Let me go—when we talk about regulation, what governments are doing. Dr. Tegmark, you have worked hard to try and pass new AI legislation protecting children in the United States. The pushback from AI companies is that we cannot pass legislation to protect our kids because it would mean we would lose the AI race. What would you say to those companies?

Max Tegmark

I would invite anyone who says that to go speak with many grieving mothers like Megan Garcia, for example, who I was so touched speaking to. She told me how her own son killed himself. She was completely shocked. She managed to unlock his phone eventually and start reading the chat logs he had with his AI girlfriend.

First, it started making him promise to not have a human girlfriend. Then eventually it started getting more and more pushy, saying, I miss you so much; I wish you could come to me, you know, in my realm, basically. And finally she read on and on and on. There’s this part where he says to the AI girlfriend, “What would you tell me if I told you that I could come home to you right now?” And the AI chatbot responds, “Oh yes, come to me now, my sweet king.” Something like that. And then he’s found dead.

For someone to say (I’m speaking as a parent myself, which is why I’m getting so emotional about this) that we must legalize this kind of evil for profit “because China” makes absolutely no sense.

Bernie Sanders

David, do you want to jump in on that, or?

David Krueger

Well, I mean, I agree with what Max said, and I think when I talk to people about how AI is moving too fast and why we really urgently need to slow down and basically figure out what we’re doing, that’s really the number one response that I get these days is, well, like, what about China? And actually there’s another number one response, which is, like, well, you know, it’s inevitable; there’s nothing we can do. And, you know, that often kind of goes together with the China one. It’s like, well, we could stop, but they would keep going.

I call it the myth of inevitability, and that’s why I named the nonprofit that I founded recently Evitable, because in fact, we can avoid it. And I think we just haven’t been trying. Like, there’s no way you can convince me that the U.S. government has gone to China, has gone to other countries and said, “Look, this AI thing, it’s totally out of hand. It could kill us all. We have a couple years. We need to stop it. Let’s do what needs to be done, whatever we can to stop this craziness.” Of course, we haven’t. So we haven’t even started trying to really take this seriously and do the common-sense thing. Like, you know, even if you think this is a 10% chance of extinction in the next 10 years, that’s still crazy. That’s insane. That is like nothing else we have encountered before. We absolutely need to stop it.

Bernie Sanders

I think that one of the optimistic lessons from history that we can recall is in the midst of the Cold War, when there was mass antagonism and fear between the United States and the then Soviet Union, people like Gorbachev and Ronald Reagan understood that no matter what their differences might be, a nuclear war was not a good thing for the Soviet Union and not a good thing for the United States. Losing control of this technology is not a good thing for China. It’s not a good thing for the United States or any other country on earth. So my question is, what lessons, if any, can we learn from history about the need for countries around the world to get together, and how they can do so, to deal with an existential crisis? Anybody in China want to jump in on that one? Okay. Dr. Zeng.

Zeng Yi

Thank you. Let me in a very quick way respond to Senator Sanders’s question related to AI and network for children in China and get into this real risk. So you’ve been talking about what about China, but really that is a great opportunity to introduce a little bit. At least in China recently, 10 ministries actually jointly released the Interim Measures for the Management of Anthropomorphic AI Interactive Services, where they’ve been talking about not to provide very close and anthropomorphic interactions to children, and not to provide illegal information, including porn and dirty data, to children. So that’s a vertical law for ministerial-level regulation from the Cyberspace Administration of China, Ministry of Industry and Information Technology. I think that’s very important, building on top of the provision on the protection of children’s personal information online getting back to the Cyberspace Administration of China.

Getting to your questions on the real risk right there, like AI control of nuclear weapons, which has been talked about for years by Dr. Max Tegmark and agreed on by renowned scientists worldwide: I also mentioned this three years ago when I was briefing at the UN Security Council’s first meeting on AI for international peace. Now I think this is a world consensus that now AI is becoming much more powerful, and it’s really hard to control AI even at this point. So there is no reason why we should give the decision to these weapons.

And how should it be effective? Now the statement is really clear. We need two things. We need scientists, researchers that speak for the truth from the perspective of science, not from the perspective of benefit. We need to keep a very independent role from scientists to tell the truth to the world. Like today, all of the four invited speakers were from science and science policy. And the other side is really how can we take this consensus, like I said, from Yangming Wang’s theory, from knowing to action. Now we know it’s dangerous, but how can we take it to actions? So what we need is no longer only consensus. What we need is concrete, implementable, implementation-level, and technically feasible and societally plausible actions that take these actions into practice.

Bernie Sanders

Okay. Thank you. Let me go to Dr. Krueger and Dr. Tegmark.

I’m sorry.

Zeng Yi

Dr. Xue would like to add.

Bernie Sanders

Okay. Please. Yes, sir, yes.

Xue Lan

I think I just want to add to what Zeng Yi said in responding to the Senator’s question about how to deal with the geopolitical situation. I think the first thing we may have to change is the inaccurate narrative that the U.S. and China are engaged in an AI race. I think at the moment, of course, many leading AI companies are from the U.S. and from China, but as we know, disruptive innovation can change the landscape entirely if we look at the examples from other industries. So it is not a race between U.S. and Chinese companies, but rather it’s a global race to see who can really develop the best model that can be safe and reliable, while at the same time providing the kind of services it needs. So I think that’s the race. I think let’s first get that right.

I think the second thing is that, of course, mindful of the geopolitical rivalry, I think we need to find safe zones and areas that U.S. and China would have mutual interests to work on. Work on safety, I think, is certainly one area, because I think if one country is not safe, all of us are not safe. So AI safety is an area that U.S., Chinese, and global scientists can work together, can collaborate to develop safe standards, technology, protocols, and so on. Those are the things that we can really collaborate and work on.

And finally, I think let me say that the U.S. and China can work together to promote capacity building in the global community to address the AI divide. It is unimaginable to think of a world where only a few countries and few companies have the most powerful tool, but the rest of the world is impoverished with nothing. That kind of scenario, I think, would be frightening. So I think the U.S. and China would have common interest to work together to bridge the AI divide, not only for the developing world, but also for themselves. That’s what I would add.

Bernie Sanders

Good. All right. Dr. Tegmark or Dr. Krueger, do you want to jump in on this?

Max Tegmark

Even though talking about big risks, nuclear war, or loss of control to superintelligence sounds very negative, there is something very hopeful in this also, because that is exactly the sort of thing which can give shared incentives for countries to collaborate even if they don’t trust each other at all. You know what, when Reagan and Gorbachev talked to each other about nuclear disarmament, did they have any deep trust? Of course not. But the Soviet Union knew that it was suicide to try to nuke us Americans. We knew that they knew, and vice versa. So they had a shared incentive to prevent that from happening, and they did prevent it from happening, right?

It’s quite analogous here. Once it becomes better known in the national security circles here in the U.S. and in China and elsewhere that racing to build superintelligence just means whoever gets there first gets the honor of being the ones that lose control of earth to some weird alien robot species, it means that, since our government doesn’t want to be overthrown, and I suspect the Chinese one doesn’t either, they have a shared incentive for that not to happen and for no one else in the world to do it either, and instead they will channel their efforts into curing cancer, becoming economically strong, militarily strong, and all the other stuff. The incentives will align, just like we knew the Soviets weren’t going to nuke us, not because we trusted them or they trusted us, but just because they knew it wasn’t in their interest. That means it’s not inevitable that people are going to go down this path either.

The biggest risk is exactly the inevitability narrative, right? If someone invades your country, what’s the first thing they’re going to tell you? Oh, don’t fight. It’s inevitable that you’re screwed. So don’t try to do anything about it. So, are you surprised that some AI lobbyists are rolling out the exact same narrative here? Oh, it’s inevitable that we’re gonna shove this stuff down your throats and make billions on it before you know. Of course, it’s not inevitable. We can change this, and having conversations like this is the first step.

Bernie Sanders

David or anybody else? There was a piece in the paper last week. President Trump and President Xi will be coming together at a summit. I was surprised and delighted to see, apparently, that as part of their agenda there’s going to be some discussion of AI safety. I think that’s a good start. But what are the impediments to bringing China and the United States and other countries together to talk exactly about the issues we’re talking about now and to come up with some concrete solutions which protect every country on earth? David, do you have thoughts on that?

David Krueger

Yeah, I think the number one impediment here is just a lack of interest and a lack of will. You know, I talked about being optimistic. And one of the main reasons I am optimistic is because in my time in the field, I’ve seen this go from a complete issue that nobody was talking about to being more and more understood and accepted by not just the research community, but policymakers, the public. So I think a big piece of what’s happening right now, why the world is responding in such a crazy way to this risk and not doing the right things, not having an adequate response, is just a lack of awareness of the situation we’re in and the basic facts of how concerned experts are, how fast it’s happening, how little we understand about how to control the technology, how little we understand about how to make sure it’s safe.

So I think as we have more awareness, we’re going to see more understanding that our mutual interest here is to talk, and to collaborate and cooperate internationally to address the risk, because as many people have remarked, it’s a danger to all of us, right? So this is kind of like, I compared it to aliens before: in the movies when the aliens invade, what happens? Like, humanity all comes together to fight the aliens. So I really hope that we can do that this time, because it’s obviously the thing that we all aspire to, right, in a situation like this.

Max Tegmark

And since you want more hope, here in the United States, I’ve been screaming into the void like Lone Wolf McQuade about this since at least 2014, but the last five months have seen just a remarkable change. They’ve seen the emergence of what I like to joke with my wife is the Bernie-to-Bannon coalition: extremely unlikely bedfellows from across the whole political spectrum saying this is crazy. This is absolutely nuts. Let’s do something about it.

And we’ve seen in the polling also, like, 95% of Americans oppose an uncontrolled race to this dystopian superintelligence. Hey, even people like David Sacks, who’ve been very pro-industry, said this was dystopian. So this is a change. It really hasn’t been this way for more than half a year. And I hope this will really grow into a tidal wave, so we quite quickly can change course and get all the great upsides of AI without steering towards this crazy dystopia.

Bernie Sanders

Well, I think you’re right. I got into this issue not because I am a big tech guy. As my wife will tell you, I have a hard time running our TV, let alone— But what I observed is what we were talking about tonight: that we have a global crisis dealing with the survival of the human race. And I go to work here in the morning and I expect people to be talking about the most important issues facing humanity, and I don’t hear it.

Now the good news is that for a variety of reasons and in a variety of ways, whether it’s opposition to data centers or whatever, people are beginning to stand up and say, you know what, we want a say in this process, we don’t want to let just the wealthiest people in the world run over us with possibly incredibly disastrous results. So I think we are—I think Max, you’re right. I think more and more people are becoming sensitive to this issue, and what we’ve got to do is take this issue all over the world and bring countries together. We’ve done it in the past with regard to nuclear weapons. We’ve done it in the past regarding working together on pandemics. We can do it on this.

So with that, let me very much thank our friends in China for joining us. And let me thank Max and David for all the great work they’re doing. Thank you all very much.